Seedream 4.5 vs FLUX.2 Pro: Full Benchmark

TL;DR

FLUX.2 Pro[2] wins overall (4.53 vs 4.42)[3], wins all four quality dimensions, and costs 12.5% less ($0.035 vs $0.040). It's the rare case where cheaper and better overlap. FLUX wins 95 of 200 head-to-heads vs Seedream's 68. Seedream 4.5[1] comes closest on subject & object integrity (4.29 vs 4.33) and fights back on food photography, landscapes, and marketing — but the overall value case favors FLUX.2 Pro.

Overall Scores

Both models sit in the Standard tier of our 18-model benchmark, but FLUX.2 Pro ranks 4th overall while Seedream 4.5 lands at 6th. The 0.113 gap is meaningful — wider than the gap between any two models in the top 5. Both completed all 200 benchmark prompts with no content restrictions.

| # | Model | Avg Score | Cost/Image | Tier |
|---|---|---|---|---|
| 1 | FLUX.2 Pro | 4.53 | $0.035 | Standard |
| 2 | Seedream 4.5 | 4.42 | $0.040 | Standard |

Average weighted score across 200 prompts. Both models completed all prompts.

Dimension-by-Dimension Breakdown

FLUX.2 Pro leads all four dimensions. The standout gap is in physics & logic (+0.29), where FLUX demonstrates stronger spatial reasoning. Seedream 4.5 comes closest on subject & object integrity (4.29 vs 4.33), a gap of just 0.04, and is most competitive on object detail and scene composition.

| Dimension | FLUX.2 Pro | Seedream 4.5 | Gap | Winner |
|---|---|---|---|---|
| Visual Fidelity | 4.83 | 4.71 | +0.12 | FLUX.2 Pro |
| Physics & Logic | 4.27 | 3.98 | +0.29 | FLUX.2 Pro |
| Subject & Object Integrity | 4.33 | 4.29 | +0.04 | FLUX.2 Pro |
| Instruction Adherence | 4.23 | 4.01 | +0.22 | FLUX.2 Pro |

These averages capture the trend, but specific use cases can diverge dramatically. Seedream wins 34% of individual prompts despite losing every dimension on average — because it dominates particular niches.
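The gap column in the dimension table is plain subtraction of the two averages. As a minimal sketch, assuming only the figures from that table (the name `dimension_gaps` is illustrative and not part of any benchmark tooling), the following Python ranks the dimensions from the widest to the narrowest FLUX lead:

```python
# Dimension averages copied from the table above: (FLUX.2 Pro, Seedream 4.5).
DIMENSIONS = {
    "Visual Fidelity": (4.83, 4.71),
    "Physics & Logic": (4.27, 3.98),
    "Subject & Object Integrity": (4.33, 4.29),
    "Instruction Adherence": (4.23, 4.01),
}


def dimension_gaps(dimensions):
    """Return (name, gap) pairs sorted from widest to narrowest FLUX lead.

    Gaps are rounded to two decimals to hide floating-point noise.
    """
    gaps = [
        (name, round(flux - seedream, 2))
        for name, (flux, seedream) in dimensions.items()
    ]
    return sorted(gaps, key=lambda pair: pair[1], reverse=True)


gaps = dimension_gaps(DIMENSIONS)
for name, gap in gaps:
    print(f"{name}: {gap:+.2f}")
```

The ordering puts physics & logic first and subject & object integrity last, matching the text above.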

Where Model Choice Matters Most

The 0.11 average gap hides extremes. On some prompts, the difference exceeds 2.0 points. Below are six use cases where model choice makes the biggest difference — three where FLUX.2 Pro dominates and three where Seedream 4.5 takes the lead.

Portrait photography

FLUX wins 6 of 10 portrait/creative prompts, Seedream wins 2, 2 ties

prompt-0027

“Portrait showing both ears of person facing camera directly, symmetrical face, neutral expression”

Scores: FLUX.2 Pro 5.00, Seedream 4.5 2.55

This is the largest gap in our benchmark — 2.45 points. Seedream misinterpreted the prompt in a way that produced impossible anatomy. FLUX delivered a clean, professional portrait.

Lifestyle & commercial scenes

FLUX wins 12 of 21 narrative scene prompts, Seedream wins 7, 2 ties

prompt-0008

“Person typing on laptop keyboard, all ten fingers positioned naturally over keys, office desk”

Scores: FLUX.2 Pro 4.40, Seedream 4.5 2.15

Seedream generated arms passing through the laptop screen — a spatial relationship failure that makes the image unusable. FLUX kept the anatomy and object interactions physically plausible.

Food photography

Seedream wins 4 of 5 food prompts, FLUX wins 1

prompt-0099

“Overhead food photography of a fully set Thanksgiving dinner table for eight guests, turkey as centerpiece on an elevated platter showing golden...”

Scores: Seedream 4.5 4.45, FLUX.2 Pro 3.35

Complex multi-object food scenes are Seedream's strongest niche. FLUX rendered only half the requested place settings and made object coherence errors — placing napkins on food, inconsistent cutlery shapes. Seedream handled the full scene specification.

Astrophotography & landscape

Seedream wins most astrophotography and landscape prompts where technical cleanliness matters

prompt-0074

“Night photography of stars, zero noise, pinpoint stars not smeared, Milky Way visible, foreground silhouette”

Scores: Seedream 4.5 5.00, FLUX.2 Pro 4.08

The prompt explicitly requested “zero noise” — a technical specification that Seedream nailed perfectly. FLUX produced visible grain, directly contradicting the core requirement. For technical photography with strict quality specs, Seedream is more reliable.

Simple compositions

Seedream wins 4 of 7 stock/general prompts, FLUX wins 2, 1 tie

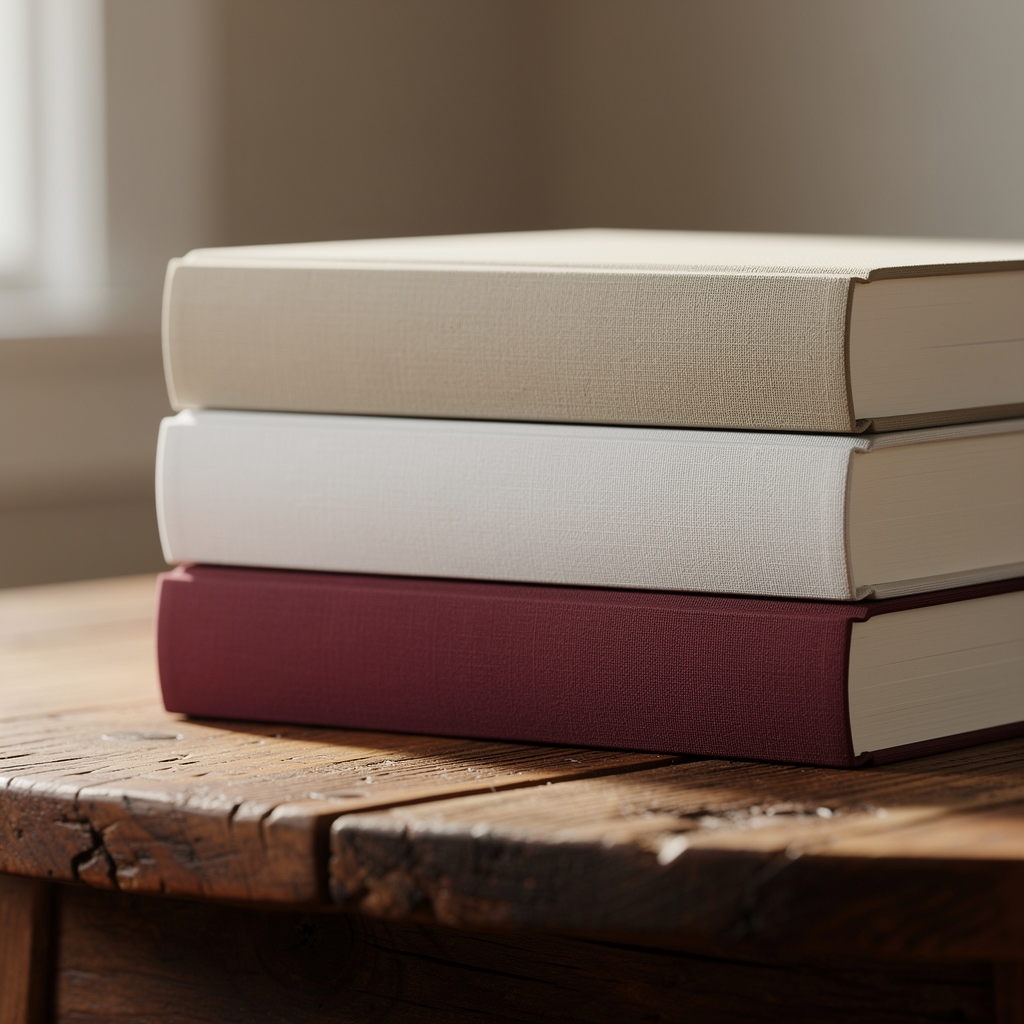

prompt-0011

“Stack of five books on a wooden table”

Scores: Seedream 4.5 5.00, FLUX.2 Pro 3.99

A surprisingly simple failure — FLUX generated 3 books instead of 5. Counting accuracy on basic prompts is one area where Seedream is more reliable. The irony: FLUX handles complex human anatomy better, but stumbles on counting objects.

Beauty & editorial

FLUX wins 3 of 5 fashion prompts, Seedream wins 2

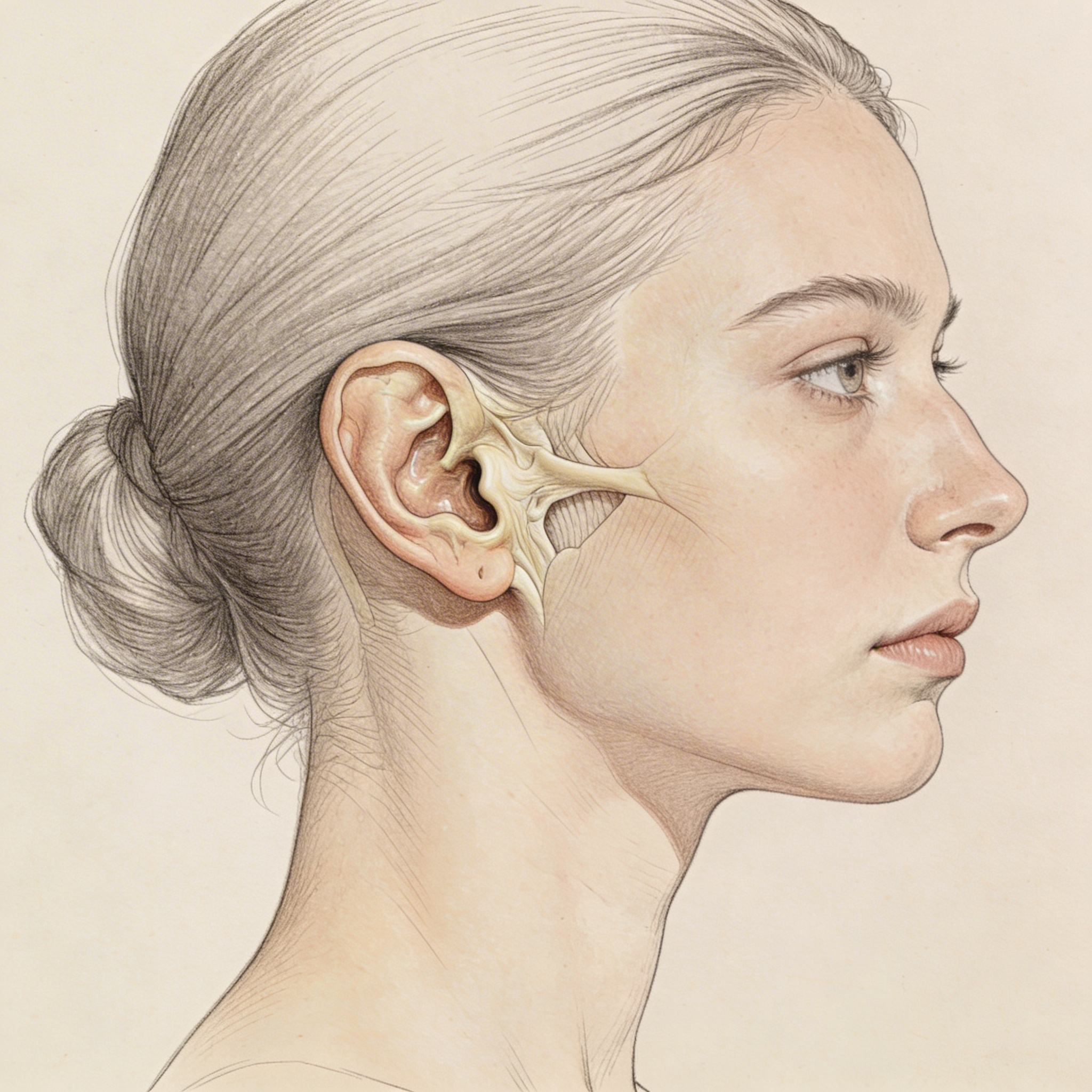

prompt-0025

“Profile view of a woman with detailed ear anatomy visible, hair tucked behind ear, elegant neck”

Scores: FLUX.2 Pro 5.00, Seedream 4.5 3.30

Ear anatomy is a known challenge for AI image models. FLUX rendered it correctly; Seedream produced a malformed ear with cartilage stretching onto the cheek. For beauty and editorial photography where anatomical accuracy is critical, FLUX is the safer choice.

Prompt-Level Results

Across all 200 prompts, FLUX.2 Pro wins 95 (47.5%), Seedream 4.5 wins 68 (34%), and 37 (18.5%) are ties. FLUX wins nearly half of all matchups, giving it a comfortable but not overwhelming lead.

FLUX wins: 95 | Ties: 37 | Seedream wins: 68

A “win” is defined as a score difference greater than 0.01 on a given prompt.
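The win rule can be made concrete with a short Python sketch. It assumes only the 0.01 threshold stated above and the six example prompt scores quoted in this article. The helper names (`classify`, `share`) are illustrative, and the benchmark's actual tallying code is not published here.

```python
from collections import Counter

WIN_THRESHOLD = 0.01  # per the article: a win needs a difference greater than 0.01


def classify(flux_score, seedream_score, threshold=WIN_THRESHOLD):
    """Label one prompt as a FLUX win, a Seedream win, or a tie."""
    diff = flux_score - seedream_score
    if diff > threshold:
        return "FLUX.2 Pro"
    if diff < -threshold:
        return "Seedream 4.5"
    return "Tie"


def share(count, total):
    """Percentage of prompts, e.g. share(95, 200) for FLUX's win rate."""
    return 100 * count / total


# The six example prompts quoted in this article: (FLUX.2 Pro, Seedream 4.5).
EXAMPLES = {
    "prompt-0027": (5.00, 2.55),
    "prompt-0008": (4.40, 2.15),
    "prompt-0099": (3.35, 4.45),
    "prompt-0074": (4.08, 5.00),
    "prompt-0011": (3.99, 5.00),
    "prompt-0025": (5.00, 3.30),
}

tally = Counter(classify(flux, seedream) for flux, seedream in EXAMPLES.values())
print(tally)

# Shares of the full 200-prompt run, using the reported counts.
for label, count in [("FLUX.2 Pro", 95), ("Tie", 37), ("Seedream 4.5", 68)]:
    print(f"{label}: {share(count, 200):.1f}%")
```

The six examples split three to three by design, since they were picked to show each model's strongest cases. The 95/37/68 split comes from the full run.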

The Value Equation

FLUX.2 Pro is the rare model that is both cheaper and better. At $0.035 vs $0.040, you save 12.5% per image while getting a roughly 2.6% higher average score (4.529 vs 4.416). That works out to 17.2% more quality per dollar (129.4 vs 110.4 score points per dollar).

| Metric | FLUX.2 Pro | Seedream 4.5 |
|---|---|---|
| Average Score | 4.529 | 4.416 |
| Cost per Image | $0.035 | $0.040 |
| Quality per Dollar | 129.4 | 110.4 |
| Median Score | 4.630 | 4.550 |
| Worst Score | 3.17 | 2.15 |

The worst-score comparison is notable: Seedream's floor (2.15) is significantly lower than FLUX's (3.17). FLUX is not just better on average — it's more consistent and less likely to produce catastrophic failures.
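The value figures above follow from simple arithmetic. The Python sketch below reproduces them from the table's average scores and per-image prices. It assumes "quality per dollar" means average score divided by cost per image, which matches the table's numbers. The function name is illustrative.

```python
def quality_per_dollar(avg_score, cost_per_image):
    """Average score points obtained per dollar spent on one image."""
    return avg_score / cost_per_image


FLUX_SCORE, FLUX_COST = 4.529, 0.035
SEEDREAM_SCORE, SEEDREAM_COST = 4.416, 0.040

flux_qpd = quality_per_dollar(FLUX_SCORE, FLUX_COST)
seedream_qpd = quality_per_dollar(SEEDREAM_SCORE, SEEDREAM_COST)

# How much less FLUX costs per image, relative to Seedream's price.
savings_pct = 100 * (SEEDREAM_COST - FLUX_COST) / SEEDREAM_COST

# How much more quality per dollar FLUX delivers, relative to Seedream.
advantage_pct = 100 * (flux_qpd / seedream_qpd - 1)

print(f"FLUX.2 Pro quality per dollar:   {flux_qpd:.1f}")
print(f"Seedream 4.5 quality per dollar: {seedream_qpd:.1f}")
print(f"Per-image savings with FLUX:     {savings_pct:.1f}%")
print(f"Quality-per-dollar advantage:    {advantage_pct:.1f}%")
```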

Strengths and Limitations

FLUX.2 Pro

Strengths

- Wins overall (4.53) and takes 47.5% of head-to-heads
- Cheaper ($0.035 vs $0.040): 12.5% less per image, 17.2% more quality per dollar
- Far better human anatomy and portrait accuracy
- Higher consistency floor: worst score 3.17 vs Seedream's 2.15
- Wins all 4 quality dimensions (visual fidelity, physics & logic, subject & object integrity, instruction adherence)

Limitations

- Counting errors on simple prompts (e.g., 3 books instead of 5)
- Weaker on food photography (wins only 1 of 5 food prompts)
- Noise/grain in technical photography with strict quality specs

Seedream 4.5

Strengths

- Closest on subject & object integrity (4.29 vs 4.33), competitive on object detail and scene composition
- Dominates food photography (wins 4 of 5) and holds its own in marketing/branding (wins 3 of 6)
- Stronger astrophotography and landscape scenes
- Better counting accuracy on simple compositions
- Handles complex multi-object table scenes well

Limitations

- Lower overall score (4.42 vs 4.53) and 12.5% more expensive
- Severe anatomy failures: arms clipping through objects, split faces
- Lower consistency floor (2.15 minimum) means more catastrophic outputs
- Loses all 4 quality dimensions on average (visual fidelity, physics & logic, subject & object integrity, instruction adherence)

The Verdict

Choose FLUX.2 Pro if...

You work with portraits, human subjects, commercial lifestyle scenes, character design, or any use case requiring reliable anatomy. It's cheaper, higher scoring, and more consistent — the default choice for most workflows.

Choose Seedream 4.5 if...

You specialize in food photography, landscape/astrophotography, or marketing materials. Seedream excels at multi-object scene composition and technical photography with strict quality specifications. It also counts objects more reliably.

Bottom line

FLUX.2 Pro is the better default. It's cheaper, scores higher, and is more consistent. But Seedream 4.5 isn't a bad model — it has genuine strengths in specific niches that FLUX can't match. If you know your use case falls in Seedream's wheelhouse, it's worth considering.
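The verdict amounts to a default with a few exceptions, which can be written as a small lookup. This is a minimal Python sketch only: the use-case labels and the `recommend_model` function are hypothetical, chosen to mirror the niches named above, and it is not how any recommendation engine actually works.

```python
# Hypothetical use-case labels mirroring the niches where Seedream 4.5 leads.
SEEDREAM_NICHES = {"food", "landscape", "astrophotography", "marketing"}
DEFAULT_MODEL = "FLUX.2 Pro"  # the better default per the benchmark


def recommend_model(use_case: str) -> str:
    """Return Seedream 4.5 for its niche use cases, FLUX.2 Pro otherwise."""
    if use_case.strip().lower() in SEEDREAM_NICHES:
        return "Seedream 4.5"
    return DEFAULT_MODEL


print(recommend_model("Food"))      # Seedream 4.5
print(recommend_model("portrait"))  # FLUX.2 Pro
```

A category-level rule like this is coarse. Per-prompt results vary within every category, so it is a starting point and no substitute for testing your own prompts.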

Related Benchmarks

FLUX.2 Pro also ranks 3rd for text rendering — see our text rendering benchmark for the full 18-model comparison.

Curious how FLUX.2 Pro compares to its siblings? Our Flux family comparison covers all 5 Flux tiers from $0.001 to $0.070.

For the top of the leaderboard, see our GPT Image 1.5 vs Nano Banana Pro comparison — the two premium models that score above both FLUX.2 Pro and Seedream 4.5.

At the same $0.040 price point, see how Ideogram 3.0 compares — it finishes last among the four models at this price.

Sources & References

All external sources were verified as of April 2026. Ratings and metrics reflect the most recent data available at time of review.

- [1] ByteDance Seed - Seedream 4.5 Model (seed.bytedance.com)
- [2] Black Forest Labs - FLUX.2 Pro (Official) (blackforestlabs.ai)
- [3] Artificial Analysis - AI Image Generation Leaderboard (artificialanalysis.ai)
- [4] Artificial Analysis - FLUX.2 Pro Benchmarks (artificialanalysis.ai)
- [5] fal.ai - FLUX.2 Pro API & Pricing (fal.ai)
- [6] Replicate - FLUX Model Pricing (replicate.com)

Recommended Benchmarks

- FLUX.2 Pro vs Ideogram 3 vs Seedream 4.5: Standard-Tier Showdown. The three most popular Standard-tier models compared. FLUX wins overall and costs less. Ideogram finishes last.
- Seedream 3.0 vs 4.0 vs 4.5: Full Family Comparison. Seedream 4.5 leads, but 3.0 at $0.018 delivers 97.7% of the quality. Skip 4.0, the worst value in the family.
- Best AI Image Generator 2026: 18 Models Ranked. GPT Image 1.5 leads, but FLUX.2 Pro at $0.035 delivers 97.6% of the quality at 26% of the price. Full 18-model rankings.

Methodology: Rankings and scores in this article are based on VibeDex's independent benchmarks. Models are evaluated by AI-powered judges across multiple quality dimensions, with scores weighted by prompt intent.

FAQ

Is FLUX.2 Pro better than Seedream 4.5?

Yes. Across 200 prompts, FLUX.2 Pro scored 4.53 vs Seedream 4.5's 4.42 and won 47.5% of head-to-head matchups vs Seedream's 34%. FLUX leads all four quality dimensions, with the largest gap in physics & logic (+0.29). Seedream 4.5 comes closest on subject & object integrity (4.29 vs 4.33) and excels in specific niches including food photography, astrophotography, and marketing materials.

Which is cheaper, FLUX.2 Pro or Seedream 4.5?

FLUX.2 Pro is cheaper at $0.035/image vs $0.040/image for Seedream 4.5. That makes FLUX.2 Pro 12.5% cheaper per image while also scoring higher — delivering 17.2% more quality per dollar.

How many prompts were tested?

Both models were tested on all 200 benchmark prompts covering photorealism, illustration, typography, product photography, concept art, architecture, and edge cases. Both completed all 200 prompts with no content restrictions or failures.

Where does Seedream 4.5 beat FLUX.2 Pro?

Seedream 4.5 wins on food & beverage photography (4 of 5 prompts), landscape/environment scenes, marketing/branding materials, and stock photography. It also excels at complex multi-object food scenes and astrophotography where technical cleanliness matters more than human anatomy.
