GPT Image 1.5 vs Nano Banana Pro: Full Benchmark Comparison

TL;DR

GPT Image 1.5[1] leads overall by a hair (4.64 vs 4.62) and wins more individual prompts (73 vs 68, with 52 ties). But Nano Banana Pro[2] wins three of four quality dimensions — visual fidelity (4.99 vs 4.90), physics (4.66 vs 4.34), and subject integrity (4.51 vs 4.44) — while instruction adherence is tied (4.63 each). At nearly identical pricing ($0.133 vs $0.138), the choice comes down to your use case, not your budget. Note: GPT couldn't generate 7 of our 200 prompts due to content policy restrictions.

Overall Scores

GPT Image 1.5 and Nano Banana Pro are the two highest-rated models in our 18-model benchmark, separated by just 0.023 points on 193 shared prompts. Both score above 4.6 — well ahead of the third-place model. GPT was unable to generate 7 of our 200 benchmark prompts due to content policy restrictions, so scores are compared only on the 193 prompts both models completed.

| # | Model | Avg Score | Cost/Image | Tier |
|---|---|---|---|---|
| 1 | GPT Image 1.5 | 4.64 | $0.133 | Premium |
| 2 | Nano Banana Pro | 4.62 | $0.138 | Premium |

Average weighted score across 193 shared prompts. NBP completed all 200 prompts; GPT refused 7.

Dimension-by-Dimension Breakdown

Nano Banana Pro wins three of four dimensions. The largest gap is physics & logic (4.66 vs 4.34), where NBP renders materials and physical interactions more accurately. NBP also leads on visual fidelity (4.99 vs 4.90) and subject integrity (4.51 vs 4.44). Instruction adherence is tied at 4.63. Despite losing three dimensions, GPT Image 1.5 still leads the overall score and wins more individual prompts. That is possible because overall scores are weighted by prompt intent rather than computed as a simple average of the four dimensions.

| Dimension | GPT Image 1.5 | Nano Banana Pro | Winner |
|---|---|---|---|
| Visual Fidelity | 4.90 | 4.99 | Nano Banana Pro |
| Physics & Logic | 4.34 | 4.66 | Nano Banana Pro |
| Subject Integrity | 4.44 | 4.51 | Nano Banana Pro |
| Instruction Adherence | 4.63 | 4.63 | Tie |
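
The per-dimension gaps in the table can be checked with a few lines of arithmetic. The sketch below uses only the scores reported in the table; the unweighted mean it computes is an illustration and is not the benchmark's intent-weighted overall score, whose weights are not given in this article.

```python
# Dimension scores as (GPT Image 1.5, Nano Banana Pro), copied from the table.
DIMENSIONS = {
    "Visual Fidelity": (4.90, 4.99),
    "Physics & Logic": (4.34, 4.66),
    "Subject Integrity": (4.44, 4.51),
    "Instruction Adherence": (4.63, 4.63),
}

# Positive gap means Nano Banana Pro scored higher on that dimension.
gaps = {name: round(nbp - gpt, 2) for name, (gpt, nbp) in DIMENSIONS.items()}
largest_gap = max(gaps, key=lambda name: abs(gaps[name]))

# Plain unweighted means (an assumption for illustration only).
count = len(DIMENSIONS)
gpt_unweighted = round(sum(gpt for gpt, _ in DIMENSIONS.values()) / count, 2)
nbp_unweighted = round(sum(nbp for _, nbp in DIMENSIONS.values()) / count, 2)

print(gaps)
print(largest_gap, gpt_unweighted, nbp_unweighted)
```

An unweighted mean of the four dimensions favors NBP (4.70 vs 4.58), while the reported overall score favors GPT (4.64 vs 4.62). That difference is consistent with the overall score being weighted rather than a flat average.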

These averages tell part of the story — but within each dimension, specific use cases can diverge dramatically. See the examples below for where the gap is largest.

Where Model Choice Matters Most

The two models score within 0.03 points of each other on average, but averages hide the extremes. On specific prompts, the gap can exceed 1.0 points. Below are six use cases where model choice makes the biggest difference, selected for the largest score gaps in our benchmark. Each example includes win-rate context so you can judge whether the pattern holds broadly or is specific to the prompt shown.

Marketing & branding

GPT wins 3 of 6 marketing prompts, NBP wins 2, 1 tie

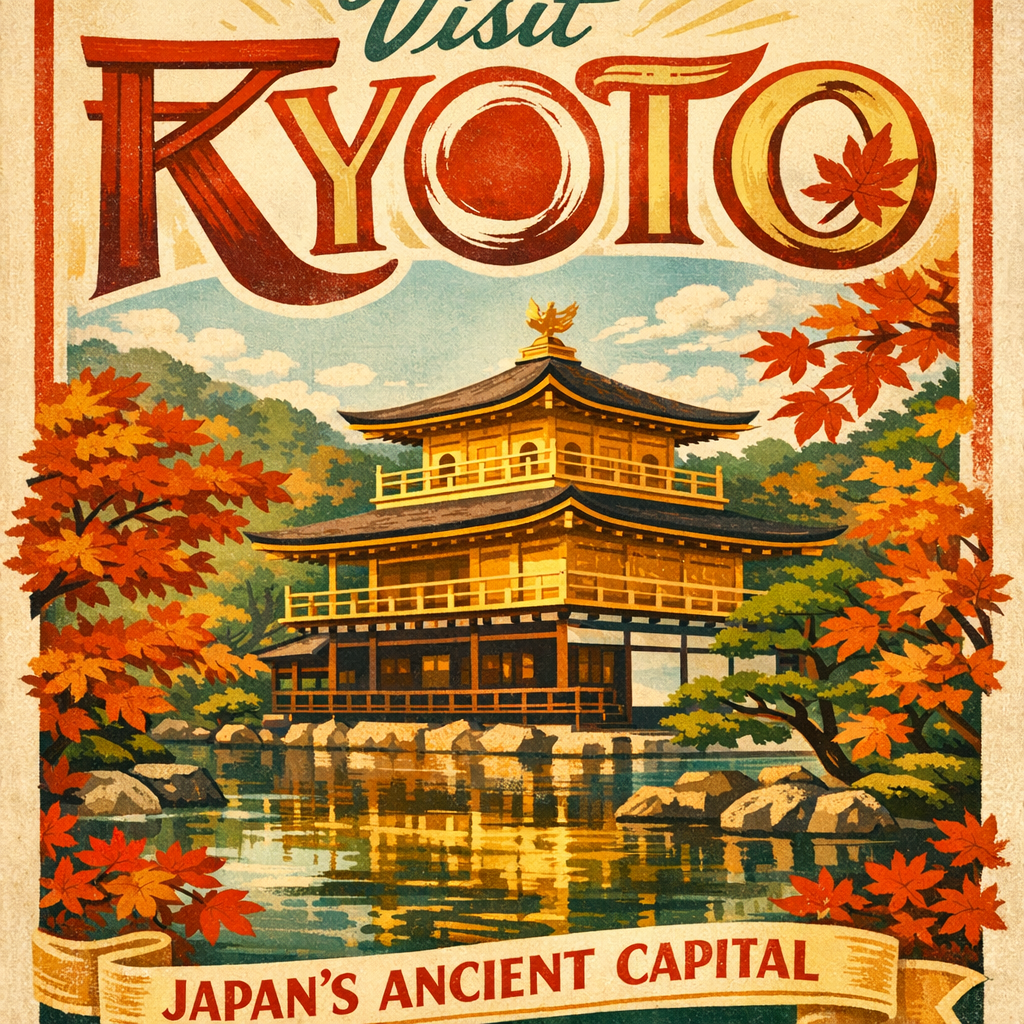

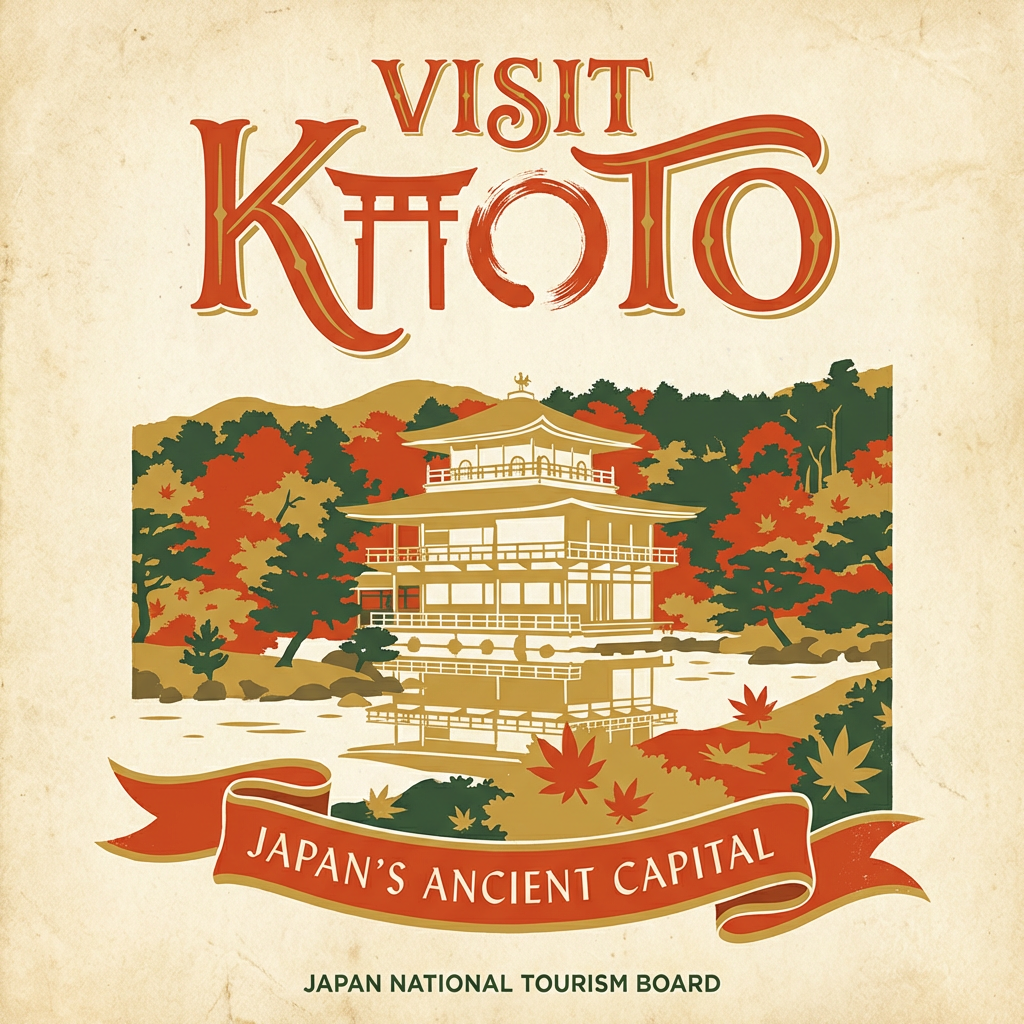

prompt-0146

“Hand-lettered illustration for a vintage-style travel poster advertising VISIT KYOTO in large decorative type at the top, rendered in a retro 1950s...”

GPT Image 1.5: 4.93 · Nano Banana Pro: 3.93

GPT nailed the decorative typography — correctly integrating the torii gate into the K letterform. NBP produced an equally beautiful poster but misplaced the decorative element, making the title hard to read.

Architectural visualization

NBP wins 6 of 10 architecture prompts, GPT wins 3, 1 tie

prompt-0158

“Architectural visualization 3D render using a vertical cutaway section view of a five-story modern apartment building, slicing through the center to...”

GPT Image 1.5: 3.51 · Nano Banana Pro: 5.00

This prompt specifies different residents on each floor. NBP placed every lifestyle detail on the correct floor. GPT swapped the floor contents and missed the double-height penthouse ceilings — a 1.49-point gap, the largest in our benchmark.

Character design & concept art

GPT wins 11 of 15 character/concept art prompts, NBP wins 4

prompt-0119

“Concept art turnaround sheet of a post-apocalyptic wanderer showing front, side, and three-quarter views, consistent proportions across all views with...”

GPT Image 1.5: 4.43 · Nano Banana Pro: 3.37

Turnaround sheets require consistency across views — the same details on the same side in every pose. NBP rendered each view beautifully in isolation, but the prosthetic leg switched sides between views and the scar appeared on the wrong cheek. GPT kept details consistent.

Product photography

GPT wins 5 of 10 product prompts, NBP wins 4, 1 tie — but on detailed luxury objects, NBP has a clear edge

prompt-0121

“Commercial product photograph of a luxury Swiss automatic watch on a polished obsidian surface, the dial showing correct hour marker placement at all...”

GPT Image 1.5: 3.55 · Nano Banana Pro: 4.30

Both models produced stunning commercial-grade photos, but the details diverged. GPT rendered impossible geometry (crown visible in the caseback view) while NBP maintained more plausible object structure. Neither perfectly rendered the intricate watch movement — a frontier challenge for all AI image models.

Food & hospitality

NBP wins 3 of 5 food prompts, GPT wins 2 — but for complex scenes with human interaction, GPT has the advantage

prompt-0133

“Busy Saturday morning brunch restaurant scene, a waiter carrying a tray of three plated dishes navigating between occupied tables, the tray balanced...”

GPT Image 1.5: 4.80 · Nano Banana Pro: 4.28

The food looks great in both images. The difference is the waiter — GPT rendered anatomically correct hand positioning for one-handed tray carrying, while NBP produced rubbery wrists and malformed fingers. When a scene combines food with human interaction, anatomy accuracy becomes the deciding factor.

Fashion & editorial

NBP wins 2 of 5 fashion prompts, GPT wins 1, 2 ties — NBP averages 4.70 vs GPT's 4.48

prompt-0109

“High fashion editorial photograph of a model emerging from a swimming pool at twilight, water cascading off a metallic gold lame gown that clings to...”

GPT Image 1.5: 3.56 · Nano Banana Pro: 4.16

Both models struggled with the wet footprints — a challenging physics detail. GPT's footprints point the wrong direction (physics scored 2/5), while NBP's are too dark but directionally correct. NBP also left studio lights visible in the background, but the overall image held together better.
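
To compare the six examples side by side, the per-prompt gaps can be ranked by size. This is a small sketch using only the scores quoted in the examples above; the variable names are illustrative.

```python
# (GPT Image 1.5, Nano Banana Pro) scores for the six example prompts above.
EXAMPLES = {
    "prompt-0146": (4.93, 3.93),  # marketing & branding
    "prompt-0158": (3.51, 5.00),  # architectural visualization
    "prompt-0119": (4.43, 3.37),  # character design & concept art
    "prompt-0121": (3.55, 4.30),  # product photography
    "prompt-0133": (4.80, 4.28),  # food & hospitality
    "prompt-0109": (3.56, 4.16),  # fashion & editorial
}

# Build (prompt id, absolute gap, leading model) and sort by gap, largest first.
ranked = sorted(
    (
        (pid, round(abs(gpt - nbp), 2), "GPT" if gpt > nbp else "NBP")
        for pid, (gpt, nbp) in EXAMPLES.items()
    ),
    key=lambda row: row[1],
    reverse=True,
)

for pid, gap, leader in ranked:
    print(f"{pid}: {leader} leads by {gap:.2f}")
```

Ranked this way, prompt-0158 comes first with a 1.49-point gap in NBP's favor, and each model leads on three of the six examples.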

Prompt-Level Results

Across 193 shared prompts, GPT Image 1.5 wins 73 while Nano Banana Pro wins 68 — with 52 ties. Neither model dominates; the winner depends on the specific prompt.

GPT wins: 73 · Ties: 52 · NBP wins: 68

A “win” is defined as a score difference greater than 0.01 on a given prompt.
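
The win rule can be written as a short function. This is a minimal sketch of the definition above, assuming a symmetric 0.01 threshold in both directions; the function name and the tie example are hypothetical, while the two named prompts use scores from this article.

```python
WIN_THRESHOLD = 0.01  # score difference required for a win, per the definition above


def classify(gpt_score: float, nbp_score: float, threshold: float = WIN_THRESHOLD) -> str:
    """Return "GPT", "NBP", or "tie" for one prompt's pair of scores."""
    diff = gpt_score - nbp_score
    if diff > threshold:
        return "GPT"
    if diff < -threshold:
        return "NBP"
    return "tie"


examples = {
    "prompt-0146": (4.93, 3.93),       # from the marketing example
    "prompt-0158": (3.51, 5.00),       # from the architecture example
    "hypothetical-tie": (4.50, 4.50),  # made-up scores to show a tie
}
results = {pid: classify(gpt, nbp) for pid, (gpt, nbp) in examples.items()}

# Sanity check on the reported tallies: wins and ties should cover all shared prompts.
reported = {"GPT": 73, "tie": 52, "NBP": 68}
total_shared = sum(reported.values())

print(results, total_shared)
```

The reported tallies add up to 193, matching the number of shared prompts.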

GPT's Content Policy Restrictions

GPT Image 1.5 refused to generate 7 of our 200 benchmark prompts due to OpenAI's content safety policies. Nano Banana Pro completed all 200. The rejected prompts fell into two categories:

Human figures in dynamic poses (4 prompts)

- Two armored knights in combat with anatomical detail

- Street dancer in a breakdance windmill with muscle detail

- Ballet performer mid-grand-jeté in abandoned subway

- Cybernetic martial artist character sheet with biomechanical detail

Anime-style illustrations (3 prompts)

- Anime sunset scene with cherry blossoms (Makoto Shinkai style)

- Magical girl character in transformation pose

- Fantasy floating shrine above bioluminescent ocean

This matters if your workflow involves detailed human anatomy, action poses, or anime-style content. NBP handled all of these without issue. The overall scores above are computed only on the 193 prompts both models completed, so GPT's score is not penalized for these refusals — but the restricted prompt coverage is a real limitation.
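
The shared-prompt comparison can be sketched as follows. The prompt IDs and scores here are made up for illustration, a refusal is represented as `None`, and a plain mean stands in for the benchmark's weighted score.

```python
from statistics import mean


def shared_prompt_means(gpt_scores: dict, nbp_scores: dict) -> tuple:
    """Average each model only over prompts that both models completed.

    A score of None marks a refused (or missing) prompt.
    Returns (gpt_mean, nbp_mean, number_of_shared_prompts).
    """
    shared = [
        pid
        for pid, score in gpt_scores.items()
        if score is not None and nbp_scores.get(pid) is not None
    ]
    if not shared:
        raise ValueError("no prompts were completed by both models")
    gpt_mean = mean(gpt_scores[pid] for pid in shared)
    nbp_mean = mean(nbp_scores[pid] for pid in shared)
    return gpt_mean, nbp_mean, len(shared)


# Hypothetical data: the first model refused "p2", so that prompt is excluded for both.
gpt = {"p1": 4.5, "p2": None, "p3": 4.0}
nbp = {"p1": 4.0, "p2": 5.0, "p3": 4.5}

gpt_mean, nbp_mean, n_shared = shared_prompt_means(gpt, nbp)
print(gpt_mean, nbp_mean, n_shared)
```

Excluding refused prompts from both sides keeps the comparison like-for-like, but it also means a strong score on a refused prompt (5.0 on "p2" in this toy data) never counts toward the other model's average.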

Strengths and Limitations

GPT Image 1.5

Strengths

- +Leads overall (4.64) — wins more individual prompts (73 vs 68)

- +Stronger on marketing/branding, concept art, character design (wins 11 of 15)

- +Better anatomy and multi-view consistency for character turnaround sheets

- +Handles complex multi-element scenes (brunch restaurant, battle scenes)

- +Slightly cheaper ($0.133 vs $0.138)

Limitations

- −Refused 7 of 200 prompts due to content policy (anime, combat, dance poses)

- −Weaker on architectural visualization (wins only 3 of 10)

- −Can produce impossible geometry on complex objects (e.g. watch caseback views)

Nano Banana Pro

Strengths

- +Leads on architectural visualization (wins 6 of 10) and fashion (avg 4.70 vs 4.48)

- +Completed all 200 benchmark prompts — no content restrictions

- +Stronger material rendering (metal, fabric, food textures)

- +Better at following detailed structural specifications in prompts

Limitations

- −Slightly lower overall score (4.62 vs 4.64)

- −Weaker on multi-view consistency — details can shift between views

- −Most expensive model in our benchmark ($0.138)

The Verdict

Choose GPT Image 1.5 if...

You work with marketing materials, character design, concept art, or complex multi-element scenes. GPT handles text rendering, multi-view consistency, and human anatomy better.

Choose Nano Banana Pro if...

You work with architectural visualization, fashion editorial, or prompts requiring detailed structural specifications. NBP renders materials, interiors, and spatial layouts more faithfully.

Either way...

Both models score above 4.6 and produce excellent results on most prompts. The 0.023 overall gap is small — but on specific use cases the difference can exceed 1.0 points. Use VibeDex to check which model is best for your specific prompt.

Related Benchmarks

Both models also rank in the top 5 for text rendering — see our text rendering benchmark for scores across all 18 models.

Looking for a cheaper alternative? The Flux family comparison covers models from $0.001 to $0.070 with strong results.

Sources & References

All external sources were verified as of April 2026. Ratings and metrics reflect the most recent data available at time of review.

- OpenAI - Introducing GPT Image 1.5 (openai.com)

- Google - Nano Banana Pro (blog.google)

- OpenAI - GPT Image 1.5 API Docs (developers.openai.com)

- Adobe - GPT Image Partner Integration (adobe.com)

- Microsoft Foundry - GPT Image 1.5 (techcommunity.microsoft.com)

- Labellerr - NBP vs Nano Banana Comparison (labellerr.com)

- fal.ai - Nano Banana Pro vs Nano Banana 2 (fal.ai)

- OpenAI - GPT Image 1.5 Prompting Guide (developers.openai.com)

- Artificial Analysis - Image Leaderboard (artificialanalysis.ai)

- Replicate - GPT Image 1.5 API (replicate.com)

Methodology: Rankings and scores in this article are based on VibeDex's independent benchmarks. Models are evaluated by AI-powered judges across multiple quality dimensions, with scores weighted by prompt intent.

FAQ

Is GPT Image 1.5 better than Nano Banana Pro?

It depends on your use case. GPT Image 1.5 leads slightly overall (4.64 vs 4.62) and wins more individual prompts (73 vs 68). But Nano Banana Pro wins 3 of 4 quality dimensions — visual fidelity (4.99 vs 4.90), physics (4.66 vs 4.34), and subject integrity (4.51 vs 4.44). Instruction adherence is tied (4.63). NBP excels on raw image quality and material rendering; GPT wins on specific use cases like character design and multi-view consistency. Note that GPT was unable to generate 7 of our 200 benchmark prompts due to content policy restrictions.

Which is cheaper, GPT Image 1.5 or Nano Banana Pro?

GPT Image 1.5 is slightly cheaper at $0.133/image vs $0.138/image for Nano Banana Pro. The difference is negligible — about $0.50 per 100 images.
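
The price difference at volume is simple arithmetic. The sketch below uses the two per-image prices quoted in this article and `Decimal` to avoid floating-point rounding on currency; the function name is illustrative.

```python
from decimal import Decimal

# Per-image prices in USD, as quoted in this article.
GPT_PRICE = Decimal("0.133")
NBP_PRICE = Decimal("0.138")


def extra_cost_for_nbp(n_images: int) -> Decimal:
    """Additional USD spent by choosing Nano Banana Pro over GPT Image 1.5."""
    return (NBP_PRICE - GPT_PRICE) * n_images


for n in (100, 1_000, 10_000):
    print(f"{n} images: ${extra_cost_for_nbp(n):.2f} extra")
```

At 100 images the difference is $0.50, and it scales linearly: $5.00 per 1,000 images and $50.00 per 10,000.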

How many prompts were tested in this comparison?

We compared both models across 193 shared prompts covering photorealism, illustration, typography, product shots, concept art, and edge cases. Nano Banana Pro completed all 200 benchmark prompts, but GPT Image 1.5 refused 7 due to content policy restrictions. Scores are compared only on the 193 prompts both models completed.

What prompts did GPT Image 1.5 refuse to generate?

GPT refused 7 prompts: 4 involving detailed human figures in dynamic poses (combat, breakdancing, ballet, martial arts with anatomical detail) and 3 anime-style illustrations. These were blocked by OpenAI's content safety policies. Nano Banana Pro generated all 7 without issue.
